Next week, Nvidia has a virtual keynote which is designed to be the gaming equivalent of the AI/machine-learning/deep learning GTC they held earlier this year, where the Ampere architecture was announced via the A100 AI/ML/DL accelerators. That GPU, while not directly the gaming version (no raytracing hardware and various other differences for the workloads of a GPU server), had a lot of exciting information that led to speculation about the Geforce version of the Ampere architecture.

With the event next week and leaks running hot and heavy, Nvidia released a video today in which its engineers talk about the mechanical engineering that goes into a graphics card, including the cooling solution and power delivery. In the process, it very casually confirms two big rumors that have been swirling around the new Geforce lineup: the cooler design that was pictured months ago, and the use of a new, proprietary 12-pin power connector with smaller wire gauge.

As a reminder, the cooler design that is floating around is this:

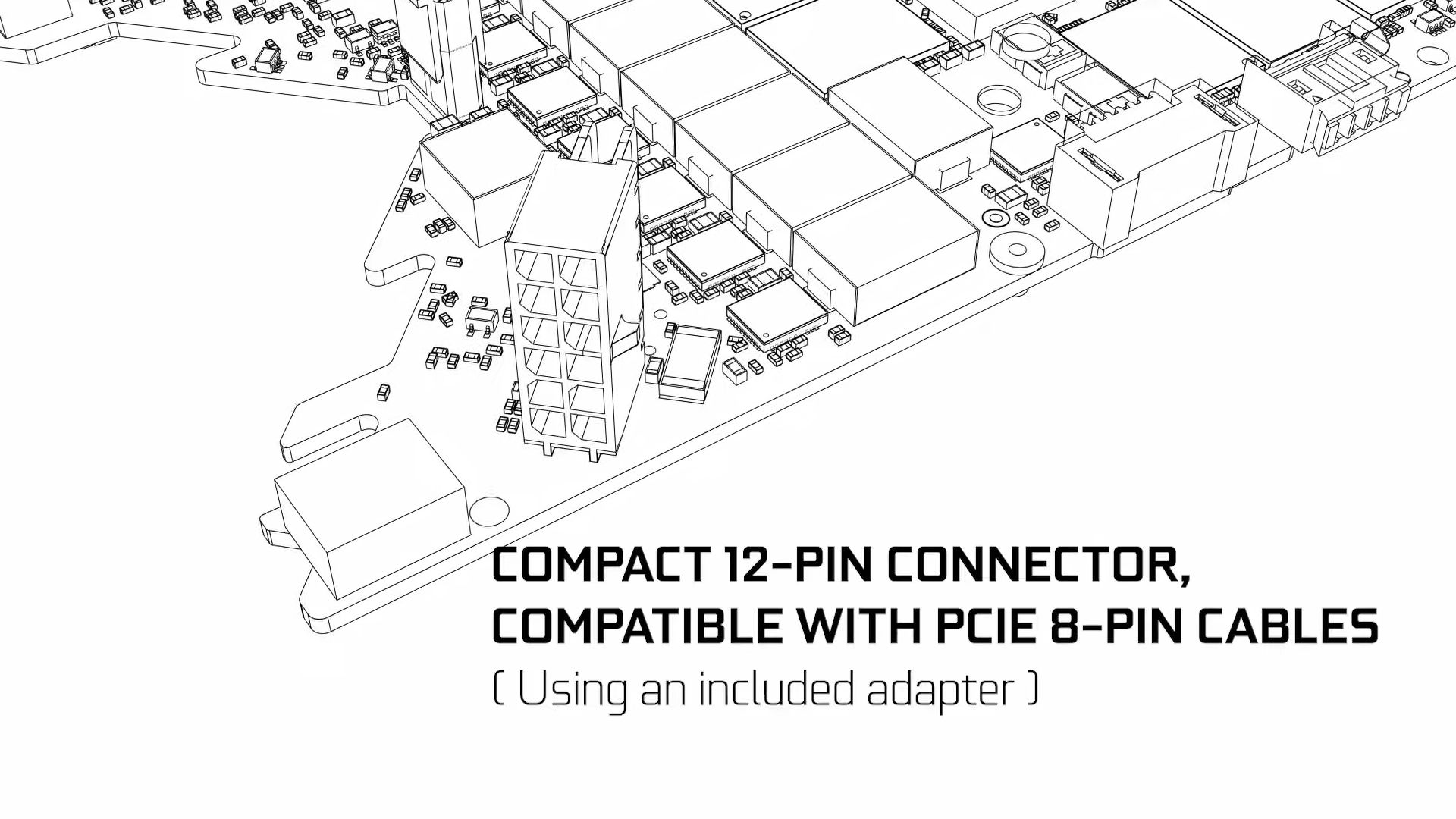

The 12-pin connector is a more interesting development. A proprietary connector pushed by a GPU manufacturer is troubling in some ways. Will your power supply have one? Adapters are going to need to ship with the cards (which Nvidia confirmed will be the case in the new video). And if it becomes a standard, do competitors get shut out? But it also grants some flexibility, since the new connector is designed to be positioned vertically on the PCB to take up less real estate on the board. The adapter in the box should help things, as will the rumor that a lot of add-in board partners (your MSIs, ASUSes, etc.) will still use standard PCI-Express 6-pin and 8-pin connectors to deliver power to their cards, although some Nvidia-exclusive partners (EVGA) will likely go in on the 12-pin connector with Nvidia.

But, with the video from Nvidia out, now we can compile the rumors and confirmed details to arrive at something that looks like what we should hear next week.

Confirmed Details:

12-Pin Power Connector: Proprietary and designed by Nvidia, this connector uses thinner-gauge wire to fit 12 pins into a smaller Molex Micro-Fit connector that stands vertically on the circuit board (2 pins wide where it meets the board, rising in a tower 6 pins high).

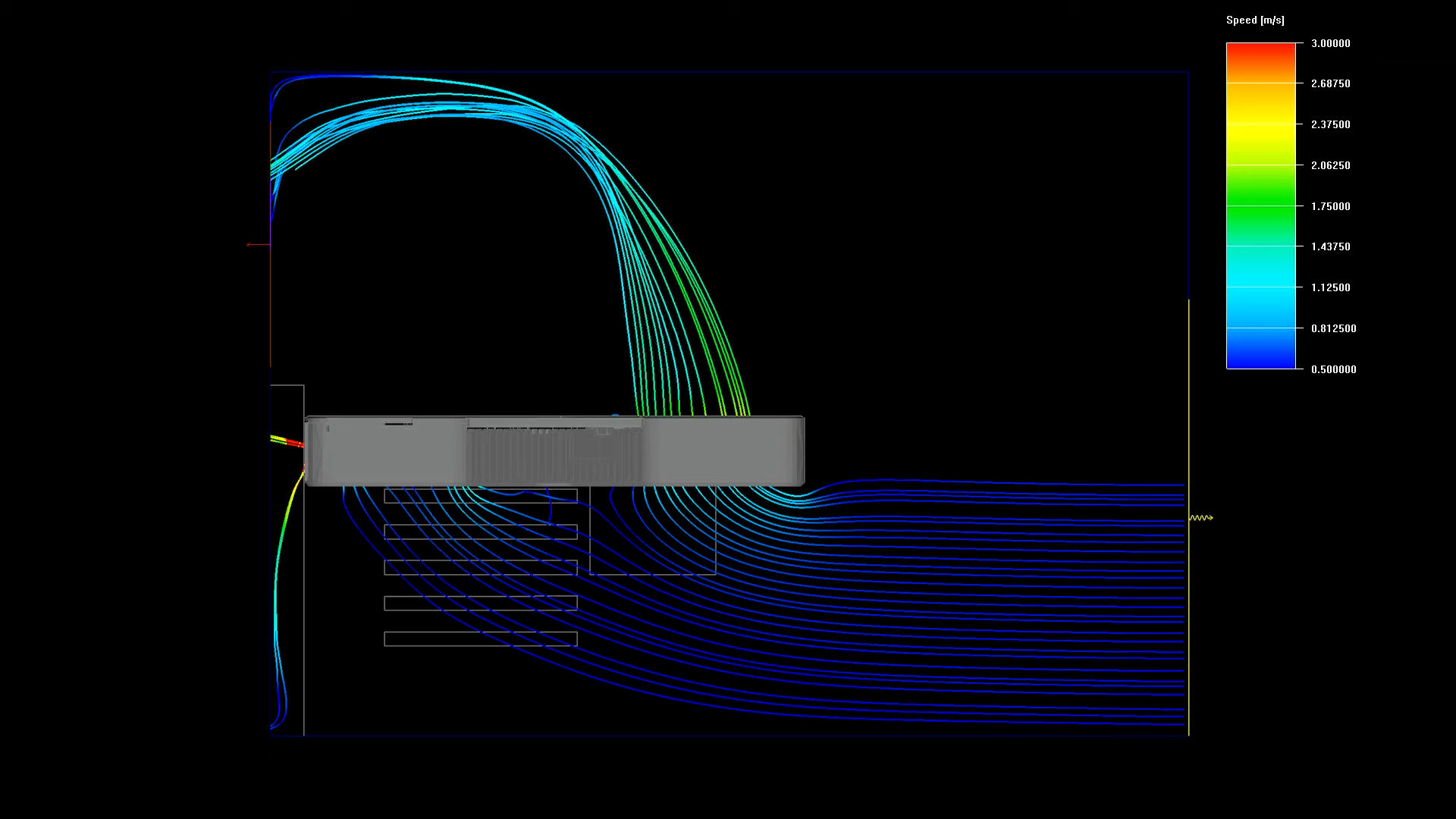

Cooling Design: The leaked PCB and cooler shots came true, and oh boy, it is very interesting. The design with fans on both sides is meant to help move air (and heat) through the heatsink and out of the card and system. However, with the images from the video today, we now know how it works, and it is a bit…bothersome.

My speculation was that the fans would both face inward towards the card, blasting air through the heatsink and venting it around the heatsink and out the back IO plate of the card. However, the airflow model in Nvidia's video indicates that both fans face the same direction. The fan over the PCB blows air down onto the PCB and out the back of the card, while the second fan, on the back of the card, pulls air through a heatsink at the front and pushes it out the back of the card. That will blast it directly at the CPU in most cases, or at the motherboard in a vertical mount situation (trapping the heat behind the graphics card, with convection slowly carrying it up to the CPU). The theoretical basis is sound: it moves more heat away from the GPU, pushing air towards the CPU means the CPU cooler can scoop up the heated air and push it on to the exhaust of the system case, and this fan placement allows some air to escape through natural convection. But it may also raise CPU temperatures, which, with modern boost clocks, might reduce performance in systems with less-than-ideal cooling. Certainly an edge case, but one worth contemplating!

The PCB Design Is…Odd: Most graphics cards, and circuit boards in general, are boring shapes – squares and rectangles, almost entirely. Boards are made that way because the base materials are commonly manufactured in those shapes and are easiest to cut into them, and because there’s no real reason to make a circuit board a weird shape unless it serves a specific purpose, like fitting a small form-factor case for a device.

Well…the new Geforce lineup and its cooler design prompt a different idea – a short, stubby and compact PCB, with a V-shaped cutout at the end. Here is an image that shows it from the Nvidia video, rendered in CAD:

This serves a few purposes, but also causes some complication. The intended purpose is cooling: cutting away the PCB leaves an unobstructed path for the back fan through the heatsink, so the heatsink can be larger, since it doesn’t need to make room for a thick PCB, and air flows freely through it instead of ricocheting off the PCB and keeping heat close to the card. However, it also increases the thermal density of the graphics card by a lot. The CAD design from the video also indicates that the card is incredibly dense, with voltage regulation modules stacked tightly against the card’s edge and VRAM immediately next to them. In a normal graphics card design, there is a fair amount of space between those components, allowing the dense heat of the VRM to be dealt with further away from the RAM and GPU, theoretically resulting in better temperatures. In the new Geforce design, these parts are right on top of each other, with only millimeters of space between them, which may have some negative effects on temperature. Nvidia seems to have judged the tradeoff worthwhile, though. So while some skepticism is warranted, the cost of packing the hardware densely and engineering to reduce crosstalk appears to have been outweighed by the thermal performance gained from an unobstructed fan and heatsink that remove heat from the card altogether.

And Now, The Rumors:

GDDR6…X?: A Micron factsheet on their new GDDR6X memory calls out that the new Geforce lineup uses it, which is surely an odd leak to have. I’d say this is probably true, but Nvidia hasn’t confirmed it. GDDR6X is just a faster version of GDDR6, much as the GDDR5X used on the Geforce GTX 1080 Ti was to GDDR5. The signaling rate is vastly improved, though – the GDDR6 on the Geforce RTX 2080 Ti runs at 14 Gigabits per second per pin, where the GDDR6X spec from Micron shows 21 Gbps per pin. The exciting thing this allows is massive bandwidth – Micron’s factsheet for the product shows that on a 384-bit memory bus (the one expected on the RTX 3090), GDDR6X delivers 1,008 GB/s, over 60 percent more than the RTX 2080 Ti’s 616 GB/s, making it the second consumer GPU to break a decimal terabyte per second of memory bandwidth, after AMD’s disasterpiece Radeon VII from early 2019. That’s not the only memory change, however…
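To make the bandwidth math concrete, here is a quick sketch of where those numbers come from: bus width times per-pin rate, divided by 8 bits per byte. The function name is my own, the 2080 Ti figures (352-bit bus, 14 Gbps) are its shipping configuration, and the 3090 figures are still just the rumored ones.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Peak memory bandwidth in GB/s: one data pin per bus bit, 8 bits per byte."""
    return bus_width_bits * gbps_per_pin / 8


# RTX 2080 Ti: 352-bit bus, 14 Gbps GDDR6
rtx_2080_ti = memory_bandwidth_gb_s(352, 14)

# RTX 3090 (rumored, not confirmed): 384-bit bus, 21 Gbps GDDR6X
rumored_3090 = memory_bandwidth_gb_s(384, 21)

uplift_percent = (rumored_3090 / rtx_2080_ti - 1) * 100

print(f"RTX 2080 Ti:        {rtx_2080_ti:.0f} GB/s")
print(f"RTX 3090 (rumored): {rumored_3090:.0f} GB/s")
print(f"Uplift:             {uplift_percent:.1f}%")
```

Both a wider bus and a faster pin rate feed into that uplift, which is why the jump is bigger than the 14-to-21 Gbps change alone would suggest.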

More RAM, More Better: The biggest rumor as of late starts from the falling prices of GDDR6 and speculates that, based on this, Nvidia would be wise to increase memory allotments on the upcoming cards, especially since the upcoming game consoles from Sony and Microsoft feature 16 GB of total system memory, which will likely increase memory demands for games in the long term. The rumor mill has gone in both an expected and an unexpected direction. The RTX 3090 is rumored to use the full memory controller instead of cutting down to 11 channels, and then to double the memory per channel, resulting in a 24 GB graphics card. The RTX 3080 is rumored to take a different approach, using a 10-channel memory controller with 10 GB of RAM tied to it, an increase over the 8 GB in the RTX 2080. 10 GB is likely to be a good sweet-spot compromise, as the Xbox Series X has 10 GB of full-speed GDDR6 with the remaining 6 GB at a slower speed, so the RTX 3080 would be a perfect match for the amount of memory that is most likely to serve as VRAM in the Xbox, at least. Meanwhile, a 24 GB card is overkill, but would offer incredible performance at higher resolutions, including 4K and (if supported on the card) 8K, and would make the RTX 3090 highly useful in some non-gaming applications that use GPU compute and need lots of memory.
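The channel math behind those rumored capacities is simple enough to sketch. I am assuming each memory "channel" here means one 32-bit controller with one memory chip on it, which is how the 2080 Ti gets a 352-bit bus out of 11 channels; the 3090 and 3080 rows are the rumored configurations, not confirmed specs, and the helper name is mine.

```python
CHANNEL_WIDTH_BITS = 32  # assumption: one 32-bit controller per memory chip


def memory_config(channels: int, gb_per_channel: int) -> tuple[int, int]:
    """Return (bus width in bits, total VRAM in GB) for a channel layout."""
    return channels * CHANNEL_WIDTH_BITS, channels * gb_per_channel


configs = {
    "RTX 2080 Ti": memory_config(11, 1),         # shipping card
    "RTX 3090 (rumored)": memory_config(12, 2),  # full controller, doubled chips
    "RTX 3080 (rumored)": memory_config(10, 1),  # cut-down controller
}

for name, (bus_bits, vram_gb) in configs.items():
    print(f"{name}: {bus_bits}-bit bus, {vram_gb} GB")
```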

Performance as Much as 70% Higher than a 2080 Ti?: This one I find hard to believe because Nvidia has made a ton of money by squeezing out incremental upgrades, with the RTX 2080 Ti itself offering only a 30% improvement over the 1080 Ti, at most. However, there are a lot of reasons to believe that Nvidia has something on their hands – they will have some competition with Big Navi from AMD, the new console generation means mainstream eyes will be turned in that direction, and a full-node process shrink (we’ll revisit this in a moment) should let Nvidia shrink down the raytracing hardware that occupied so much of the Turing die, offering more power there in a smaller surface area and leaving room to raise CUDA core counts more drastically than the Turing lineup did. The rumored CUDA core counts that I’ve seen (for comparison, a 1080 Ti has 3,584 CUDA cores, the 2080 Ti has 4,352 cores, and the 3090 is rumored to have 5,248 cores) are a larger increase in absolute terms (896 cores against 768, though slightly smaller as a percentage), and one that should come with improved clock speeds when tied to a smaller process node, which should result in a larger jump in performance. Couple that with faster memory, better cooling for higher boost clocks, and more power delivery, and the performance number, while crazy high, could be a theoretical maximum. But that depends on one other factor…
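For anyone who wants to check the core-count comparison, here is a small sketch of the generation-over-generation math. The 3090 number is the rumored one, and raw core count is only one input to performance, so this says nothing about whether the 70% figure holds up.

```python
cuda_cores = {
    "GTX 1080 Ti": 3584,
    "RTX 2080 Ti": 4352,
    "RTX 3090 (rumored)": 5248,  # rumor, not a confirmed spec
}


def generational_gain(old: int, new: int) -> tuple[int, float]:
    """Return (absolute core increase, percentage increase)."""
    return new - old, (new / old - 1) * 100


pascal_to_turing = generational_gain(cuda_cores["GTX 1080 Ti"], cuda_cores["RTX 2080 Ti"])
turing_to_ampere = generational_gain(cuda_cores["RTX 2080 Ti"], cuda_cores["RTX 3090 (rumored)"])

print(f"1080 Ti -> 2080 Ti: +{pascal_to_turing[0]} cores ({pascal_to_turing[1]:.1f}%)")
print(f"2080 Ti -> 3090:    +{turing_to_ampere[0]} cores ({turing_to_ampere[1]:.1f}%)")
```

On core count alone the two jumps are about the same size, so most of any larger performance gain would have to come from clocks, memory, and architectural changes.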

What Process Node Is Ampere Gaming Using?: The A100 Ampere accelerator is using TSMC’s 7nm process, which is a great process that is proven through AMD’s recent product lineups and the mobile SoCs that use it (most notably, the iPhone 11 hardware). However, the news in 2019 was that Nvidia had entered an agreement with Samsung to have them manufacture GPUs for Nvidia using their 7nm process – which is slightly worse, but doesn’t have as much competitive demand. Then, as news came out about low yields on Samsung’s 7nm node, Nvidia talked publicly about placing a volume of orders with TSMC. Given all of this, it would have seemed like a slam dunk that Nvidia would be using TSMC 7nm for Ampere gaming as well.

However, the deal with Samsung still exists, and Nvidia has confirmed publicly that they are still using Samsung for some components. Most rumors since then have indicated that Samsung would be making the Ampere gaming GPUs, perhaps not on 7nm, but instead on their 8nm process (which is actually a derivative of their 10nm process, because the size number is confusingly a marketing term rather than an objective size!). Some speculation is that Nvidia might even use Samsung’s 5nm process, as it is design-compatible with their 7nm process and would not require the redesign that 8nm would, allowing the card to stay on schedule after being designed for Samsung 7nm and having that process become unviable due to yield rates. Yield rates can be worked around, too: a low yield rate is a cost Samsung bears, since it is unable to provide the full value of a silicon wafer to the purchaser, and assuming Nvidia taped out the Ampere gaming parts months ago, as would be a standard timeline, there is manufacturing lead time that can be leaned on to build up more working parts. It also may allow them to bin parts for power efficiency in great detail, holding high-efficiency parts for Quadro workstation cards while using the leaky, less power-efficient GPUs for Geforce. Speaking of which…

Geforce RTX 3xxx Cards Are Going to Use How Much Power?: The biggest rumor that drew concern is that the Ampere gaming cards are going to use a ton of power. The biggest part of the initial 12-pin connector rumors wasn’t that a new connector itself was altogether bad, but that it signaled Nvidia needed something special to supply enough power to the board. In fact, a leaked Seasonic modular cable for the 12-pin connector recommends using it with 850w or higher power supplies – which may just be down to which model numbers are compatible. The total board power (all components included) has been rumored to be as high as 400 watts! At that rate, the card is going to be incredibly hot even on an efficient process, and coupled with the reference PCB design’s density of components, that could potentially be very bad. For reference, an 8-pin PCIE power connector can deliver 150w of power, meaning your high-end card with two such connectors can pull 300w via those cables, plus up to 75w from the PCIE slot it is plugged into. Now, an average graphics card doesn’t pull a full 375w even if equipped with that connector loadout, but it is capable of doing so if allowed, and enthusiast cards from add-in board partners often relax power limits, or even raise them by adding additional connectors, to allow for higher out-of-the-box performance and higher boost clocks, at the cost of more heat.
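Here is that power budget worked out as a quick sketch. The slot, 6-pin, and 8-pin limits are the standard PCIE figures; the 400 W total board power is only the rumored number, and the function name is my own.

```python
# Standard PCIE power limits, in watts
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150


def board_power_budget(eight_pins: int = 0, six_pins: int = 0) -> int:
    """In-spec power available to a card: slot plus auxiliary connectors."""
    return PCIE_SLOT_W + eight_pins * EIGHT_PIN_W + six_pins * SIX_PIN_W


dual_eight_pin = board_power_budget(eight_pins=2)
rumored_board_power = 400  # rumor, not a confirmed spec
shortfall = rumored_board_power - dual_eight_pin

print(f"Slot + 2x 8-pin budget: {dual_eight_pin} W")
print(f"Rumored board power:    {rumored_board_power} W")
print(f"Over budget by:         {shortfall} W")
```

If the 400 W rumor is right, a standard dual 8-pin layout comes up short within spec, which fits the idea that Nvidia wanted a new connector (or that partner cards will need a third connector).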

A total board power of 400w is a lot, however. It isn’t inherently bad – if your system has the headroom to allow a 400w graphics card and that is a tradeoff you are okay making, great! – but it does signal some potential issues. Firstly, for a card to need to push that hard on a new silicon process means that Nvidia is potentially pushing the gaming cards to the edge of usability for the sake of performance, perhaps as a response to a competitive threat. It could also point back to them using a less-reliable silicon process: some of the defects that hurt a yield rate could be leaky silicon that doesn’t meet specifications, and Nvidia could qualify those parts at a higher power limit to account for that leakage and then sell them to gamers, who are generally less discerning about power usage in their gaming rigs. It could be a combination of those things – leaky silicon on an immature process technology being pushed to its absolute maximum to meet a potential competitive threat. I intuitively get why this matters to some people (who wants a space-heater PC that needs higher fan speeds to stay at peak performance?), but to me personally it doesn’t matter as much. I am, in fact, perfectly willing to put a 400w card into my system if it measurably improves my gaming experience by a wide margin. But to a growing population of gamers, that kind of thing does matter, and while lower-tier cards in the lineup should be less power-hungry, the top end drives a lot of what people perceive about the lineup.

3 Slot Stock Cooler for the RTX 3090: There is a photo circulating the net that purports to show the RTX 3090 in full glory, as a 3-slot graphics card with a taller heatsink and PCB than the standard Nvidia Founders Edition and reference cards. This isn’t a huge surprise, given that many partner cards for the 2080 Ti went to 3 slots straight away as a differentiator and gained performance for it, but to see Nvidia push that is interesting. It plays into the theory above about board power being pushed to a limit, as a 3-slot, 2-fan, full-cover heatsink would provide a lot of cooling! It also raises a lot of questions about what kind of cards we’ll see from partners. In the RTX 2080 Ti lineup, it was common to see 3-fan axial designs, 3-slot heatsinks, both 3-slot and 3-fan designs combined, taller heatsinks, and longer cards with heatsinks that extend past the PCB. We also got hybrid designs from more than just EVGA and MSI, with more manufacturers making custom cards with integrated closed-loop watercooling, which led to some fascinating designs like the ASUS RTX 2080 Ti Matrix, which basically put an entire closed-loop water setup into the GPU shroud! I’m most curious to see how many board partners adopt the V-shaped PCB design. If it is the sole Nvidia reference design, then most manufacturers wanting to meet launch deadlines will have to use it and build their cooling around it. But I have also heard that Nvidia will have a traditional reference PCB with a standard shape available for partners to buy as well, which should mean that we’ll see an interesting mix of cards using the dense V-shaped board and traditional designs using the standard partner coolers developed over the years (Asus, MSI, Gigabyte, and others have standard cooler designs they repeat constantly regardless of the product underneath, which is why, for example, nearly all Asus Strix graphics cards have the same bulky, angular 3-fan cooler).

Overall, I’m really excited for this launch because it should mark an uptick in graphical fidelity for PC gaming and will kick off a new competitive market with top-shelf solutions from both Nvidia and AMD, as the new consoles start to come to store shelves later this year. For me, I’m optimistic that I might be able to get an RTX 3090, provided that it offers sufficient performance gains over my 1080 Ti, which I could then resell while it maintains a good second-hand value. My MMOs don’t really need the full extent of the horsepower of such a card, but it would be nice when I play through my recent backlog, and it would make other things I do easier (Blender renders, video renders, 3D modeling with real-time previews). In general, though, a big part of why I am so fascinated with this launch in particular is that, for as much as Nvidia is an avatar for all things wrong with free-market oriented thinking, at least this lineup promises unique and different approaches – new card designs, new cooler designs, completely different ways of thinking about how to deliver the physical card – and all of that is really fascinating to me. Now, all that is left is to see whether the performance is as interesting a story.