Yesterday, a surprising announcement came down from Nvidia, surprising both for its content and for the braggadocio on display. Nvidia is “unlaunching” the RTX 4080 12GB model, leaving next month’s launch to just the 16GB model, and then followed this news with photos of people lined up outside brick-and-mortar stores to buy the 4090 this week, a card that has been pretty consistently sold out.

So, Nvidia’s hubris aside, what happened here?

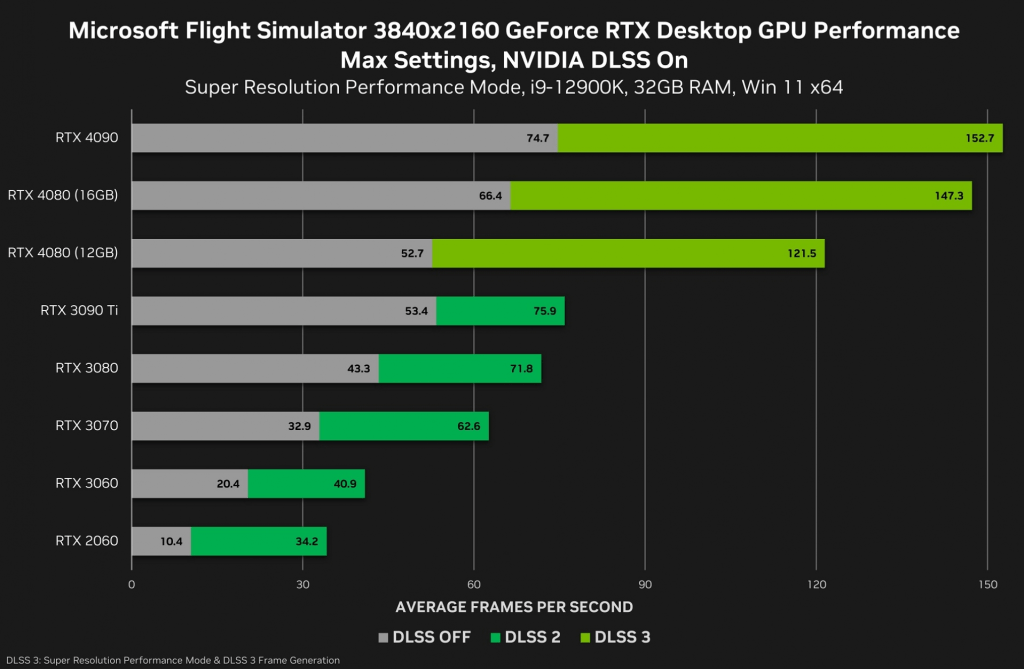

Firstly, I think we need to revisit the specs – it was obvious, painfully so, that the RTX 4080 12 GB was what would likely have been a 4070 or even a 4060 in a saner world, with a saner Nvidia. A card in Nvidia’s traditional top-end enthusiast lineup with a 192-bit memory bus in 2022 left a bad taste in people’s mouths. The 4080 12GB being a cut-down GPU die with even less horsepower than the 16GB variant was also a bad move, reeking of deception, as many consumers trust the model number to mean roughly equivalent performance with VRAM size as the only differentiator. Lastly, but perhaps most significantly, the price Nvidia was about to ask for this butchered GPU was fucking absurd – $900. While it had a chance of beating the 3080 it was meant to succeed, the result would in all likelihood have been close: the planned 4080 12GB had fewer CUDA cores and a narrower memory bus, changes partly offset by faster clock speeds and a significantly larger L2 cache on the new GPU die, but it would still have landed far too close for a generational upgrade. Numbers Nvidia released this week (conveniently after the 4090 was out in the wild, hmm) showed the 12 GB 4080 was only barely better than the 3080 without DLSS, which bears this out.

At this point, it felt fairly obvious that it was time to reconsider. I think Nvidia expected a less-informed segment of consumers to eagerly snap up the card with the higher model number at the ludicrous asking price, but I never saw a single soul actually interested in the 4080 12GB model. It seemed like everyone in the world saw right through Nvidia’s awful scheme and called them out on it, so the only logically viable move was to pull the plug and move forward without the albatross of the 12GB card around their neck.

Yet this move also comes with some problems and concerns of its own.

Firstly, the board partners who’ve been making this card for months now will be sitting on a pile of system-ready cards that are no longer being sold. According to the rumors going around, the likely answer is that the cards will be delayed and relaunched under a new model name, perhaps one that finally puts the card into an appropriate category for its expected performance. This process is painful, though, because it means holding retail-ready cards and either completely unboxing them to print new packaging and rebrand the hardware itself (some of which will have the model printed or etched right onto the heatsink), or simply slapping a sticker on the boxes and shipping them as-is. Fixing every existing card to have proper branding and model numbering in every way is time-intensive and costly, but it is also probably the right play, as sticker badging means an unscrupulous scalper could peel off the sticker and list the card as a 4080 to an unwitting sucker on a resale site. You’d hope that Nvidia would, in that case, be willing to cover the cost of this effort for their partners, but given the experiences that even Nvidia’s former flagship partner, EVGA, shared about their relationship with the green giant, that might not be a foregone conclusion!

Secondly, and also for the board partners, this move is likely to mean some models under the new GPU name (probably the 4070, like it should have been in the first fucking place) will skew profit downward on parts that are already very low margin. Nvidia’s partner guidelines often specify a minimum spend on components not supplied by Nvidia itself, like heatsinks, and while the actual Bill of Materials cost of the cards is likely not that close to the $900 retail price floor Nvidia set, some manufacturers likely kitted the cards with high-cost cooling solutions to match the perceived value of the 80-series branding. There is no chance in hell that the rebranded cards sell at $900 retail, so some board partners may end up eating low margins or even losing out on sales of the card. You’d hope that Nvidia would have measures to prevent this, like rebates or a reduced per-unit cost for the GPU and VRAM kits they sell to board partners, but expecting Nvidia to do something nice is not wise. Also, the 4080 12 GB is the only card announced in the initial Lovelace lineup without a first-party Nvidia Founders Edition. Quite telling, that.

Thirdly, the question now is how absurd the follow-up will be. For right now, this is a good move – the 4080 12GB was anti-consumer, far too highly priced, and relied on the average consumer not reading up on the specs and reviews of their intended purchase. In the era before YouTube and social media, with magazine reviews coming a month or two after launch, this might have even worked! However, in the current state of things, letting the 4080 lineup launch later than the 4090 laid the lie bare. While the 4090 is a good card (with a ridiculous price tag in its own right), its benchmarks also reveal that Nvidia’s posturing during the announcement was, as ever, a show: the actual gains are not, in fact, 3-4 times the prior generation’s performance, but less than 100%, so not even twice as fast. Judging just from the non-DLSS benchmarks Nvidia loosed into the world this week, the 4080 12GB would have been a clear loser: still faster than its predecessor but only barely, and certainly not by enough to justify its higher launch price, let alone the premium over the existing stock of 3080 models at retail right now.

Nvidia will likely relaunch the same card as the 4070, but then we get to do the whole dance over again with that model. What is the pricing? How many board partner models will be available on day one at the retail floor price? If it becomes a 4070, does it now get a Founders Edition model, or is Nvidia going to shuffle this trash onto the partners and make it their problem? Will a 4070 priced at, say, $600 or $700 be worthwhile? I still expect Nvidia to fly too close to the sun with the relaunched version of the card, but how close they get remains to be seen.

Fourthly, Nvidia wants to pretend that they care about customer “confusion” over the 12GB model’s cut-down chip, but this is not the first time Nvidia has pulled this exact move. They had two variants of the GTX 1060: one with the full specs and 6 GB of VRAM, and a 3 GB model with a cut-down GPU! They had two flavors of the GT 1030, one with GDDR5 memory and the other with DDR4 memory, the latter substantially slower due to sharply reduced memory bandwidth, with no model distinction between the two and no real price difference either! There was the GTX 970’s 4 GB of RAM, only 3.5 GB of which ran at full speed, over which Nvidia had to settle a class-action lawsuit! Again and again, Nvidia has shown a history of being willingly deceitful and conniving if they believe it can help them sell some hardware, and there’s no benefit of the doubt to be had here. If even 5% of the reception of the 12GB 4080 had been positive, they would have sold it as planned, the card would have sold to people who don’t obsessively research their tech purchases, and it likely would have worked out just fine for Nvidia, because they’ve never particularly cared about taking reputational hits. We’ll revisit that point in a moment.

Lastly, Nvidia ending the announcement with photos of people lining up for the 4090 launch is just pure shitheaded hubris. Yes, a ton of people did line up for that card – I’ve seen new-in-box scalped listings all over the internet marked up to as much as $2,500. And honestly, here’s the catch: for a certain audience, the 4090 is an ideal card worth having. Is it for gamers? Probably not – the card is absolute overkill, far above and beyond what it needs to be. But for artists and professionals doing 3D design work, rendering, and the like? The RT uplift with the 4090 in particular makes it a strong card for that market. The only reason I’ve even considered wanting one is as a boost to productivity in 3D modeling and rendering, because the card’s viewport performance in Blender, Unreal Engine 5, and the like is way faster than the prior-gen offerings. I’m not going to get one, but in that light it is pretty tempting! The 4080 12GB, however, has little of the sauce that makes those performance improvements possible, and for gamers it represents, at best, a 20% improvement over the baseline 3080 at 4K resolution without DLSS. There’s just nothing there to justify what Nvidia was asking. Nvidia, of course, has to present this as a level-headed, planned decision and not a trial balloon that exploded in their faces, so they’re selling hard how great 4090 sales have been.

The problem is that it will work, too. Most people I know, myself included, have what I would call an adversarial relationship with Nvidia. I loathe Nvidia the company, and I’ve said that pretty plainly on multiple occasions here. Nvidia, however, for as detestable a company as they are, makes the best graphics hardware currently available in most higher-end price segments and has robust software support both in games and in creative applications. For me as a Blender enthusiast, the only GPU brand that makes sense is Nvidia, because the OptiX features in Blender make real-time raytracing in the viewport work well while modeling and accelerate finished-product renders a lot. While Blender in particular has added AMD GPU support in the 3.x update cycle, the Nvidia OptiX render path simply works better, and Nvidia ships their Studio drivers with specific optimizations for creative applications. NVENC is the best GPU-based video encoding solution for streaming in terms of balancing quality and system impact. While Nvidia’s more recent drivers have been fraught with some issues (I had a specific BSOD crash caused by Nvidia drivers and web video playback, and there was the Year of Flickering Shadows in World of Warcraft due to Nvidia drivers), they’ve generally been the best of the 3 major GPU vendors for driver stability at this point. They have less of the “fine wine” phenomenon that AMD does, where GPUs gain double-digit percentage performance from driver updates, but their drivers still deliver decent performance gains over time. And while fine wine is nice to a point, I think it is generally better that Nvidia’s driver updates for new game releases are highly optimized out of the gate, leaving less to squeeze out in subsequent updates.

So now I guess we all wait, in all likelihood until CES in January, to see just how Nvidia tries to salvage this bungle.

On top of the physical unboxing and repackaging, the partners are also going to have to reflash the chips on the cards to re-identify them as whichever line they end up in. I expect 4070 as well, or perhaps an eventual 4070 Ti if the margins are too thin to drop that far back. I’d be beyond surprised if these ended up as far down the line as 4060 branding, but I guess you never know.

I expect I’ll be skipping this generation entirely, but I am leaving the door open to taking another look when the 4080 Ti rolls around.

That’s a great point – I had forgotten about the need to flash the BIOSes, which makes it even worse for the board partners. I generally agree with the 4070 idea, because I don’t think they’ll try to push it too far down the stack, but I keep getting stuck on that absolutely dumb 192-bit memory bus, which is traditionally an xx60-class choice!

I’m of two minds on this generation. On the one hand, right now, the 4090 looks damn good for 3D work, which I am doing more of, even for viewport performance alone. For gaming, this generation is a hard pass for me until either a big price drop or just sitting it out for the 5000 series. I can’t justify a 4090 for now even for 3D work (for the same price I could get an incredible drawing tablet for modeling and sculpting work, which would be more valuable to me ATM), but the thought is entertaining after I just watched a test level in Unreal Engine 5 take almost 10 minutes to cook!

Okay, with a post title like that (and referencing the movie of the same name), I guess somebody has to say it: “Awlriight Awlriight Awlriight…”

And here I thought I was the only one who remembered that movie!

Not the first or last time that Nvidia plays games with consumers...